The contemporary mobile landscape is undergoing a fundamental re-architecture, shifting from static, reactive applications to autonomous, intelligent ecosystems. This evolution is not merely an additive feature set but a complete reimagining of how software engages with human intent and environmental context. For a strategic technology partner like The Softix, the objective is to move beyond simple digital presence toward engineering growth through precision-driven innovation. As of 2026, the integration of Artificial Intelligence (AI) has become the cornerstone of competitive differentiation, with projections indicating that 40% of enterprise applications will feature task-specific AI agents by the end of the year. This report provides an exhaustive analysis of the technical, strategic, and economic frameworks required to integrate advanced intelligence into mobile platforms, synthesizing current market data with forward-looking architectural principles.

The Strategic Rationale for AI-Native Engineering

The transition to AI-native mobile applications is driven by an irreversible shift in user expectations and the availability of specialized hardware. Mobile users no longer seek tools; they seek partners: systems that can anticipate needs, automate routine decisions, and provide hyper-personalized interactions. Competitive analysis suggests that organizations adopting AI early achieve a significant strategic edge, often realizing a 250% to 400% return on investment within the first 24 months of launch.

The scale of this shift is reflected in global download statistics and revenue projections. In 2024, apps featuring AI capabilities were downloaded 17 billion times, accounting for 13% of all global downloads. Generative AI (GenAI) applications specifically generated $1.3 billion in in-app purchase revenue in the same period, reflecting a 180% year-over-year increase. This surge is supported by the rapid maturation of 5G networks, which are projected to reach 5.9 billion subscriptions by 2027 and which promise up to a 100x speed increase and latency approaching 1 ms, the conditions required for sophisticated cloud-to-edge orchestration.

Competitive Benchmarking and Value Proposition

Analysis of competitor strategies, such as those promoted by Arpatech and XAutonomous, highlights a common emphasis on hyper-personalization and technical roadmaps. However, the 2026 landscape demands a deeper integration that moves beyond “bolted-on” chatbots toward “agentic” workflows, in which software reasons across tasks and systems. The Softix differentiates itself by framing AI as a strategic consultant rather than a mere utility, focusing on scalability and agile engineering that transforms ideas into high-performance digital realities.

A Technical Roadmap for Intelligent Integration

Integrating AI into mobile application development is a multi-phase engineering process that begins with strategic alignment and concludes with continuous post-deployment refinement. The process must be governed by a “Secure by Design” philosophy, ensuring that data privacy and system resilience are baked into the architecture from inception.

Phase 1: Strategic Discovery and Goal Alignment

The initial phase focuses on identifying high-impact use cases that align with the core business objectives. For The Softix, this means asking how technology can command growth and convert users through precision. Common goals include enhancing user retention through predictive analytics, reducing operational costs with automated support, or improving security through biometric and behavioral analysis. Measurable Key Performance Indicators (KPIs) must be established early to track user retention, conversion uplift, and operational efficiency.

Phase 2: Data Engineering and Governance

Data is the foundation of any intelligent system, and its preparation typically accounts for 25% to 40% of the total project budget. This phase involves collecting, cleaning, and labeling high-quality data while ensuring strict compliance with privacy regulations like GDPR, HIPAA, and SOC 2. Developers must account for data accuracy and the removal of noise, as poor data quality is cited as the primary reason for 70% to 85% of AI project failures.

Phase 3: Architectural Selection (Cloud, Edge, and Hybrid)

A critical architectural decision involves the placement of AI inference. Cloud AI offers the massive computational power required for frontier reasoning models, while On-Device (Edge) AI provides the low latency and privacy essential for real-time applications. Most 2026 enterprise applications adopt a hybrid approach, utilizing quantized on-device models for fast local tasks and cloud-based models for complex multi-modal processing.
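
The hybrid placement decision described above can be sketched as a small routing function. This is a minimal illustration under stated assumptions, not a production policy: the `InferenceRequest` fields, the `route` function, and the 512-token limit are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InferenceRequest:
    """Hypothetical description of a single inference call."""
    prompt_tokens: int             # size of the input
    multimodal: bool               # needs image or audio processing
    contains_sensitive_data: bool  # data that should not leave the device
    online: bool                   # device currently has connectivity


# Assumed upper bound for what the quantized on-device model handles well.
ON_DEVICE_MAX_TOKENS = 512


def route(request: InferenceRequest) -> str:
    """Return "device" or "cloud" for a request."""
    # Without connectivity the local model is the only option.
    if not request.online:
        return "device"
    # Privacy takes priority: sensitive data stays on the device.
    if request.contains_sensitive_data:
        return "device"
    # Complex multi-modal or long-context work goes to the cloud model.
    if request.multimodal or request.prompt_tokens > ON_DEVICE_MAX_TOKENS:
        return "cloud"
    # Everything else is a fast local task.
    return "device"
```

The ordering of the checks is itself a design choice: this sketch lets privacy outrank capability, so a sensitive multi-modal request stays local even if the on-device model handles it less well.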

Phase 4: Tech Stack and Framework Integration

The selection of the technology stack determines the application’s scalability and hardware compatibility. For cross-platform development, frameworks like Flutter and React Native dominate the frontend, while Django and Laravel are preferred for robust backend AI integration. Specialized AI tools like TensorFlow Lite, Core ML (for iOS), and ML Kit (for Android) are used to embed models directly into the mobile environment.

| Platform | Recommended Framework | Key Advantage |
|---|---|---|
| iOS (Native) | Core ML / Swift | Optimized for Apple Silicon |
| Android (Native) | ML Kit / Kotlin | Seamless Google ecosystem |
| Cross-Platform | Flutter / React Native | Single codebase, rapid MVP |
| Agent Orchestration | LangGraph / CrewAI | Complex multi-agent logic |
| Data Search | MongoDB Atlas | Integrated vector search |

Phase 5: Building and Training Models

For unique business needs, custom models are trained on data split into training and testing sets to ensure real-world reliability. When using Generative AI, prompt engineering becomes a financially relevant decision, as optimized prompting strategies can reduce inference costs by 25% to 35%. Organizations are increasingly fine-tuning foundation models rather than training from scratch, which provides high capability at a significantly lower cost.
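
The training and testing split can be illustrated with a few lines of standard-library Python. This is a simplified sketch: the function name, the 20% test fraction, and the fixed seed are assumptions for the example, and real projects often use a library routine and a separate validation set as well.

```python
import random
from typing import Sequence, TypeVar

T = TypeVar("T")


def train_test_split(
    records: Sequence[T], test_fraction: float = 0.2, seed: int = 42
) -> tuple[list[T], list[T]]:
    """Shuffle records reproducibly and split them into (train, test)."""
    if not 0.0 < test_fraction < 1.0:
        raise ValueError("test_fraction must be between 0 and 1")
    shuffled = list(records)
    # A seeded generator keeps the split identical across runs.
    random.Random(seed).shuffle(shuffled)
    test_size = max(1, round(len(shuffled) * test_fraction))
    # The held-out test set is never shown to the model during training.
    return shuffled[test_size:], shuffled[:test_size]
```

Keeping the seed fixed means that accuracy figures from different experiments are measured against the same held-out records.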

Phase 6: Deployment, Monitoring, and Retraining

Post-launch, the system enters a continuous improvement cycle. Feedback loops are used to monitor the accuracy of predictions and retrain models with fresh data. Maintenance and monitoring typically add 15% to 25% of the original build cost annually, a factor that must be included in the budget from day one.
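
A feedback loop of this kind can be sketched as a rolling accuracy monitor that signals when retraining is due. The class name, window size, and 90% threshold below are hypothetical; the sketch also assumes that ground-truth outcomes eventually become available for comparison.

```python
from collections import deque


class AccuracyMonitor:
    """Track prediction accuracy over the most recent `window` outcomes."""

    def __init__(self, window: int = 100, threshold: float = 0.9) -> None:
        self.threshold = threshold
        # A bounded deque discards the oldest outcome automatically.
        self._outcomes = deque(maxlen=window)

    def record(self, predicted: object, actual: object) -> None:
        """Store whether one prediction matched the observed outcome."""
        self._outcomes.append(predicted == actual)

    @property
    def accuracy(self) -> float:
        if not self._outcomes:
            return 1.0  # no evidence of degradation yet
        return sum(self._outcomes) / len(self._outcomes)

    def needs_retraining(self) -> bool:
        """Flag retraining only once a full window falls below the threshold."""
        window_full = len(self._outcomes) == self._outcomes.maxlen
        return window_full and self.accuracy < self.threshold
```

Waiting for a full window before raising the flag avoids retraining on the basis of a handful of early mistakes.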

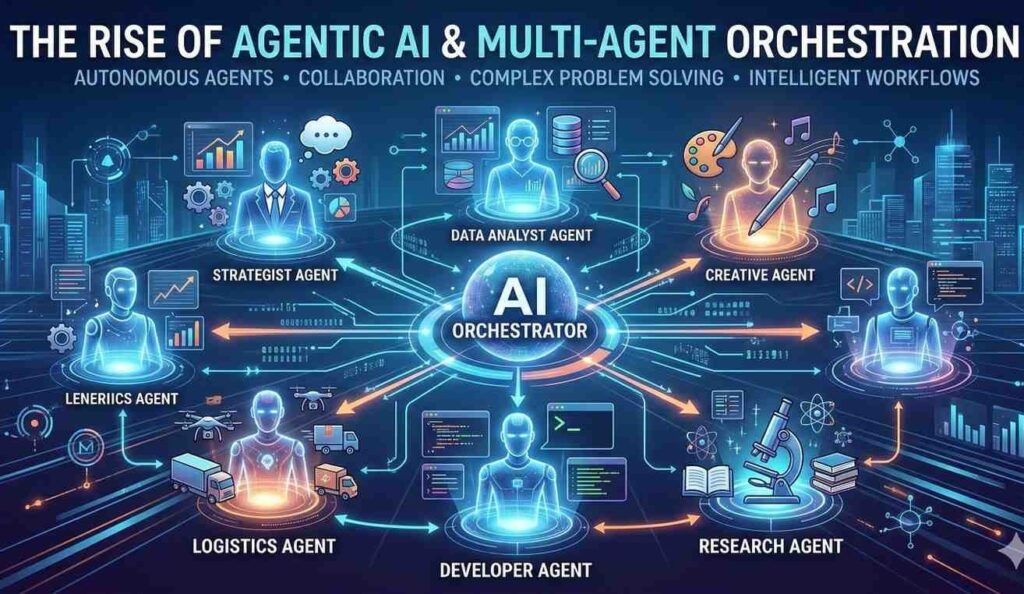

The Rise of Agentic AI and Multi-Agent Orchestration

In 2026, the industry has shifted from AI as a passive assistant to AI as an active operator. Agentic AI refers to software entities capable of pursuing broader objectives through long-horizon planning and contextual decision-making. This move represents the transition from rule-based automation to goal-driven autonomy.

Multi-Agent Systems vs. Monolithic Agents

Early AI implementations often relied on a single “do-everything” agent, which created the AI equivalent of a monolithic architecture: difficult to debug and hard to scale. Modern enterprise architectures favor multi-agent orchestration, where specialized agents focus on domain-specific tasks and coordinate through a central layer. For example, a healthcare app might deploy a “retrieval agent” to gather patient records, a “diagnostic agent” to analyze images, and a “governance agent” to ensure HIPAA compliance.

| Feature | Single-Agent Architecture | Multi-Agent Orchestration |
|---|---|---|
| Complexity | Low (Focused scope) | High (Modular/specialized) |
| Scalability | Limited | High (Enterprise use cases) |
| Resilience | Single point of failure | High (Fault tolerance) |
| Logic | Linear execution | Delegation and negotiation |
| Best For | Simple chatbots / Utility | Complex workflows / ERP |
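
The healthcare example can be sketched as a central orchestrator that passes a shared context through specialized agents. Everything here is hypothetical and deliberately simplified: the agent functions are stand-ins that return canned values, and the `Orchestrator` class does not represent the API of LangGraph, CrewAI, or any other framework.

```python
from typing import Callable

# Each hypothetical agent takes the shared context and returns an updated copy.
Agent = Callable[[dict], dict]


def retrieval_agent(context: dict) -> dict:
    # Stand-in for fetching patient records from a data store.
    return {**context, "records": f"records for {context['patient_id']}"}


def diagnostic_agent(context: dict) -> dict:
    # Stand-in for image analysis; a real agent would call a model here.
    return {**context, "finding": "no anomaly detected"}


def governance_agent(context: dict) -> dict:
    # Stand-in compliance check: approve only when consent is recorded.
    return {**context, "approved": bool(context.get("consent"))}


class Orchestrator:
    """Central layer that runs specialized agents in a planned order."""

    def __init__(self, agents: dict[str, Agent]) -> None:
        self.agents = agents

    def run(self, plan: list[str], context: dict) -> dict:
        for name in plan:
            try:
                context = self.agents[name](context)
            except Exception as error:
                # A failing agent is recorded instead of aborting the whole run.
                errors = context.get("errors", []) + [f"{name}: {error}"]
                context = {**context, "errors": errors}
        return context
```

A run such as `Orchestrator({...}).run(["retrieval", "diagnostic", "governance"], {"patient_id": "p-1", "consent": True})` returns a context that carries each agent's contribution, which makes individual steps easier to inspect than the output of one monolithic agent.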

Frameworks for Agentic Development

The deployment of agentic systems is supported by sophisticated frameworks that provide the preset architecture for task decomposition and communication. Frameworks like LangGraph enable developers to build custom, controlled processes with transparency, while Semantic Kernel serves as the middleware connecting AI functionality to existing enterprise applications. These tools allow for “self-correction,” where the agent evaluates the outcome of its previous actions and modifies its plan for future attempts, a critical capability for operating in dynamic environments.
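
The “self-correction” pattern reduces to a loop of act, evaluate, and revise. The sketch below is framework-independent and assumes three caller-supplied functions (`act`, `evaluate`, `revise`), all hypothetical names; it is not the LangGraph or Semantic Kernel API.

```python
from typing import Callable


def run_with_self_correction(
    act: Callable[[str], str],
    evaluate: Callable[[str], bool],
    revise: Callable[[str, str], str],
    plan: str,
    max_attempts: int = 3,
) -> tuple[str, int]:
    """Execute a plan, check the outcome, and revise the plan on failure.

    Returns the last outcome and the number of attempts used.
    """
    outcome = ""
    for attempt in range(1, max_attempts + 1):
        outcome = act(plan)
        if evaluate(outcome):
            return outcome, attempt
        # Feed the failed outcome back so the next plan can differ.
        plan = revise(plan, outcome)
    # The attempt budget is exhausted; the caller decides how to escalate.
    return outcome, max_attempts
```

Bounding the number of attempts matters in practice, since each retry of a model-backed `act` step adds inference cost and latency.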

Architectural Optimization: From Cloud to Edge

The decision of where to run an AI model has profound implications for performance, privacy, and operating expenses. On-device AI market value is forecast to expand ten-fold over the next decade as mobile silicon becomes more capable.

On-Device Inference and Performance

Running AI locally on the mobile CPU, GPU, or Neural Engine eliminates network round-trips, often cutting response times in half compared to cloud queries. For real-time applications like AR overlays or instant voice translation, on-device inference can generate tokens in under 20ms, ensuring the experience remains fluid. Furthermore, on-device models are always available, providing critical functionality in areas with poor or zero connectivity.

Model Compression and Capability

To fit within the memory and power constraints of a mobile device, large models undergo significant optimization. Techniques like SmoothQuant migrate quantization difficulty from activations to weights, enabling 8-bit quantization with minimal loss. Pruning methods like SparseGPT can remove 50% of model weights in a single shot without retraining, significantly reducing the storage footprint. Distilled reasoning models, such as MobileLLM-R1, demonstrate 2-5x better performance on reasoning benchmarks compared to models twice their size running on the same hardware.
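
The basic ideas behind these techniques can be shown with plain Python lists. The sketch below implements ordinary symmetric 8-bit quantization and simple magnitude pruning; it is not SmoothQuant or SparseGPT, which add activation-aware scaling and error-compensating weight updates respectively, and all function names are illustrative.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization to the range [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # One scale factor maps the largest weight onto the int8 limit.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from their int8 codes."""
    return [q * scale for q in quantized]


def magnitude_prune(weights: list[float], sparsity: float = 0.5) -> list[float]:
    """Zero out the given fraction of weights with the smallest magnitude."""
    prune_count = int(len(weights) * sparsity)
    by_magnitude = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for index in by_magnitude[:prune_count]:
        pruned[index] = 0.0
    return pruned
```

Each quantized weight is stored in one byte instead of four, and the round-trip error per weight is at most half the scale factor, which is why large outlier values (the problem SmoothQuant targets) hurt accuracy: they inflate the scale for every other weight.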

Cloud AI and Infinite Scalability

Despite the rise of edge computing, cloud AI remains essential for tasks requiring frontier reasoning or access to massive, real-time datasets. Cloud platforms like Azure, AWS, and Google Cloud offer orchestration tools that manage multi-agent workflows across diverse systems. Cloud models can be updated centrally, ensuring that all users immediately benefit from the latest improvements and knowledge bases without needing a client-side update.

The UI/UX Revolution: AI-First Design Patterns

In 2026, user interface and experience design (UI/UX) have entered a new phase where intelligence is the foundation, not an enhancement. Products are increasingly personalized, predictive, and dynamic, shifting from creating static screens to shaping AI behaviors and human-AI relationships.

Generative UI and Temporal UX

Traditional design conventions, such as light-mode defaults and static content rendering, are being replaced by AI-native standards. “Streaming text,” where words appear character-by-character as they are generated, transforms a 4-second latency into an active experience, keeping users engaged while the model is still processing. Skeleton screens with shimmer animations have replaced generic spinners, providing immediate visual context that reduces perceived wait times by 60%.
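
The streaming pattern amounts to rendering partial output as each chunk arrives rather than waiting for the full response. A minimal sketch, assuming the model client exposes its output as an iterable of text chunks (the `stream_to_ui` name is hypothetical):

```python
from typing import Iterable, Iterator


def stream_to_ui(chunks: Iterable[str]) -> Iterator[str]:
    """Yield the text the interface should display after each chunk."""
    shown = ""
    for chunk in chunks:
        shown += chunk
        # The UI layer re-renders with the text accumulated so far.
        yield shown
```

Because the generator is lazy, the first characters can be drawn as soon as the first chunk exists, which is what turns the wait into visible progress.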

Confidence Indicators and Explainable UX

A significant new pattern is the “confidence indicator,” a visual element that communicates how certain the AI is about its output. This builds trust, especially in high-stakes fields like medicine or finance. Transparent system behavior, or “Explainable UX,” answers the user’s “why” by providing contextual explanations for suggestions and automated actions.
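
A confidence indicator can be as simple as mapping a model probability to a display tier. The thresholds below (0.85 and 0.60) are illustrative assumptions and would need calibration against real model behavior before users could rely on them.

```python
def confidence_label(probability: float) -> str:
    """Map a model probability in [0, 1] to a display tier."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    if probability >= 0.85:
        return "high"
    if probability >= 0.60:
        return "medium"
    return "low"


def explain(suggestion: str, probability: float, reason: str) -> str:
    """Pair a suggestion with its confidence tier and the reason behind it."""
    return f"{suggestion} ({confidence_label(probability)} confidence: {reason})"
```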

| Design Dimension | 2023 Convention | 2026 AI-First Standard |
|---|---|---|
| Content Display | Static database render | Real-time streaming / Typewriter |
| Loading State | Spinner / Progress bar | Skeleton screens + Shimmer |
| User Control | Explicit state changes | Predictive / Intent-based |
| Interface Type | Visual-first grid | Conversational / Multimodal |
| Feedback | Static toast notifications | Micro-animations / Confidence |

Multimodal and Zero-UI Interfaces

Design is moving off the screen toward “Zero-UI,” which relies on voice, gestures, or environmental cues. Voice-first interfaces have seen a 65% year-on-year growth, becoming the primary input method for a significant segment of mobile users. Multimodal UX allows users to switch seamlessly between typing, speaking, and interacting visually, with the AI maintaining context across all modes.

Security and Ethics: Defending the Intelligent Edge

As AI systems gain autonomy, they introduce unique security challenges that traditional cybersecurity methods cannot address. By 2026, AI security is no longer an afterthought but a core requirement “baked in” from day one.

Prompt Injection and the Lethal Trifecta

Prompt injection is the number one risk for LLM applications, occurring when an attacker tricks the model into ignoring its original instructions. This can lead to “goal hijacking” or “instructional overrides” where the AI becomes a weapon for data exfiltration or system compromise. The risk is greatest under the “Lethal Trifecta”: an agent that combines access to private data, exposure to untrusted content, and the ability to communicate externally. Exploits disclosed in 2025, such as EchoLeak and GeminiJack, demonstrated how such agents could be forced to exfiltrate sensitive data via hidden instructions in emails or documents.

Data Leakage and RAG Risks

Retrieval-Augmented Generation (RAG) is transformative for business intelligence but creates a new “compliance nightmare” regarding data leakage. AI outputs can inadvertently reveal sensitive training data, proprietary code, or PII. To mitigate this, organizations must implement differential privacy and strict access controls, ensuring that agents only access appropriate information while respecting security boundaries.
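
Strict access control in a RAG pipeline usually means filtering retrieved documents against the caller's permissions before anything reaches the prompt. The sketch below assumes a hypothetical `Document` type that carries its own set of permitted roles; differential privacy is a separate technique and is not shown.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    """Hypothetical retrieved chunk tagged with the roles allowed to read it."""
    doc_id: str
    text: str
    allowed_roles: frozenset[str]


def authorized_context(
    retrieved: list[Document], user_roles: set[str]
) -> list[Document]:
    """Keep only documents that share at least one role with the user."""
    return [doc for doc in retrieved if doc.allowed_roles & user_roles]
```

Applying the filter after retrieval but before prompt assembly means the model never sees content the user could not have opened directly, so it cannot leak that content in its answer.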

Mitigation and Defense-in-Depth

Mitigating these risks requires moving beyond simple keyword filtering toward comprehensive behavioral awareness. Key strategies include:

- Prompt Hygiene and Containment: Confining what an agent can do and logging all decisions for review.

- Instruction Boundaries: Isolating system instructions from user content to prevent malicious overrides.

- Adversarial Testing (Red Teaming): Systematically attempting to “jailbreak” the model to identify weaknesses before deployment.

- Sandboxing Agents: Isolating AI agents in controlled environments during initial deployment to monitor real-world behavior patterns.
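
Two of these strategies, instruction boundaries and containment with logging, can be sketched briefly. The tool names and the `agent.audit` logger name are hypothetical; the role/content dictionaries follow the common chat-message convention rather than any specific vendor's API. Separating roles reduces but does not eliminate injection risk, so it complements the other measures rather than replacing them.

```python
import logging

logger = logging.getLogger("agent.audit")

# Hypothetical allowlist: the only tools this agent may ever invoke.
ALLOWED_TOOLS = frozenset({"search_catalog", "get_order_status"})


def build_messages(system_prompt: str, user_content: str) -> list[dict[str, str]]:
    """Keep system instructions and untrusted user content in separate messages."""
    return [
        {"role": "system", "content": system_prompt},
        # User text is never concatenated into the system instructions.
        {"role": "user", "content": user_content},
    ]


def authorize_tool_call(tool_name: str) -> bool:
    """Allow a tool call only if it is on the allowlist, and log the decision."""
    allowed = tool_name in ALLOWED_TOOLS
    logger.info("tool=%s allowed=%s", tool_name, allowed)
    return allowed
```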

Economic Realities: Costs, Maintenance, and ROI

The development of AI applications in 2026 requires a nuanced understanding of financial modeling. Creating a bespoke AI solution can cost anywhere from $20,000 to over $1,000,000, depending on complexity, data requirements, and the integration approach.

Budget Breakdowns and Cost Drivers

In 2026, the cost of mobile application development has shifted to include cloud software, automation, and long-term maintenance strategies. Data preparation alone accounts for up to 40% of project budgets, while hiring specialized talent (AI engineers, data scientists, and UX designers) remains a primary expense.

| Project Tier | Estimated Cost (2026) | Typical Timeline | Primary Cost Drivers |
|---|---|---|---|
| Basic AI MVP | $15,000 – $50,000 | 2 – 4 Months | Limited features, API integration |
| Business AI App | $50,000 – $150,000 | 4 – 8 Months | Advanced chatbots, predictive analytics |
| Enterprise Platform | $150,000 – $400,000 | 7 – 12 Months | Multi-agent, high security |
| AI Ecosystem | $400,000 – $1M+ | 12 – 24 Months | Autonomous workflows, compliance |

Ongoing Inference and Infrastructure Expenses

Unlike traditional apps, AI-powered systems incur continuous operational costs. Model inference, the cost of running queries, is a primary budget line. GPT-4 API usage, for example, can cost between $0.03 and $0.06 per 1,000 tokens, while model inference for high-volume apps can reach $5,000+ per month. Cloud storage for massive datasets can add another $500 to $5,000 monthly.
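
The per-token pricing translates into a monthly figure with simple arithmetic. The function below is a rough estimator; the traffic numbers in the usage note are assumptions chosen for illustration, and the sketch ignores differences between input and output token prices.

```python
def monthly_inference_cost(
    requests_per_day: int,
    tokens_per_request: int,
    price_per_1k_tokens: float,
    days: int = 30,
) -> float:
    """Estimate monthly API spend from traffic volume and per-token pricing."""
    total_tokens = requests_per_day * days * tokens_per_request
    return total_tokens / 1000 * price_per_1k_tokens
```

For example, an assumed 5,000 requests per day at 1,000 tokens each and $0.03 per 1,000 tokens comes to roughly $4,500 per month, which is consistent with the $5,000+ range cited for high-volume apps.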

Calculating and Maximizing ROI

The ROI of AI is realized through Tangible (Hard) and Intangible (Soft) benefits. Hard ROI results from labor cost reductions and revenue growth. For instance, automating IT operations with AI can lead to fewer outages and faster response times, while AI-powered personalization can lift conversion rates by 10% to 20%. Soft ROI includes improved employee morale and better executive decision-making through AI-powered data analytics.

To maximize ROI, teams should:

- Work Iteratively: Introduce AI in small stages to reduce risk and prevent development fatigue.

- Learn from User Data: Adjust roadmaps based on how users actually interact with the AI features rather than attempting to shape behavior artificially.

- Measure Proficiency, Not Just Adoption: High usage rates can mask zero productivity improvement if users lack the skills to extract real value from the tools.
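
Hard ROI can be estimated with the standard formula of net benefit divided by total cost. The sketch below folds in annual maintenance as a fraction of the build cost; the 20% default and the example figures are assumptions, and soft benefits are left out because they are difficult to quantify.

```python
def roi_percent(
    total_benefit: float,
    build_cost: float,
    annual_maintenance_rate: float = 0.20,
    years: float = 2.0,
) -> float:
    """Return ROI as a percentage of total cost over the given period."""
    # Total cost is the build plus maintenance accrued each year.
    total_cost = build_cost * (1 + annual_maintenance_rate * years)
    return (total_benefit - total_cost) / total_cost * 100
```

With an assumed $100,000 build, 20% annual maintenance, and $490,000 of measurable benefit over 24 months, total cost is $140,000 and ROI is 250%.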

High-Impact Industry Implementations

AI is not a generic tool; its value is deeply tied to industry-specific domain knowledge. The Softix focuses on industries where precision and scalability are paramount, such as healthcare, fintech, and retail.

Healthcare and Predictive Wellness

The AI in healthcare market is projected to reach $208 billion by 2030, with 80% of hospitals already using AI to improve efficiency. Key use cases include:

- AI-Augmented Diagnostics: Using computer vision for medical imaging analysis, which can identify irregular patterns weeks before they become symptomatic.

- Workflow Coordination: Autonomous agents managing hospital discharges, coordinating across care teams, pharmacies, and transport systems.

- Mental Health Support: Online therapy apps and behavioral pattern analysis for predicting mental health episodes.

Fintech and Autonomous Wealth Management

In 2026, 67% of millennials prefer AI-driven financial advice, creating a $1.2 trillion market opportunity. High-impact fintech implementations include:

- Fraud Detection: Real-time monitoring of transaction patterns that flags anomalies static, rule-based systems would miss.

- Autonomous Financial Planning: Creating personalized investment strategies and automating tax optimization for users.

- KYC and Compliance: Automating regulatory document reviews, reducing due diligence time by 70%.
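
As a very simplified illustration of transaction anomaly flagging, the sketch below scores a new amount against a user's history with a z-score. Production fraud systems rely on learned models over many more signals; the function name and the threshold of 3 standard deviations are assumptions for the example.

```python
from statistics import mean, stdev


def is_anomalous(
    history: list[float], amount: float, z_threshold: float = 3.0
) -> bool:
    """Flag an amount that deviates strongly from past transactions."""
    if len(history) < 2:
        return False  # too little history to judge
    sigma = stdev(history)
    if sigma == 0:
        # All past amounts were identical, so any different amount stands out.
        return amount != history[0]
    z_score = abs(amount - mean(history)) / sigma
    return z_score > z_threshold
```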

Retail and the Personal Shopper Revolution

E-commerce is moving beyond basic recommendation engines to create truly immersive experiences.

- Visual Search and Try-On: Using computer vision to let users find products visually or visualize furniture in their homes with 98% accuracy.

- Demand Forecasting: Analyzing foot traffic and POS data to optimize inventory and adjust production schedules against demand shifts.

- Dynamic Pricing: Strategically optimizing prices for both profit and customer satisfaction in real-time.

Future Outlook: The AI Ecosystem of 2026 and Beyond

The converging trends of 2026 point toward a “Super App” ecosystem where multiple functionalities are combined into single, AI-powered environments. Interfaces are becoming “invisible,” as intelligent systems run underneath to remove friction before it appears. For developers and businesses, the framework ecosystem has shifted toward consolidated, enterprise-backed options that offer measurable financial returns rather than mere development convenience.

Success in this landscape requires a validation-first approach: securing commitments from potential users before writing a single prompt. By building for speed and shipping with an MVP that solves one core problem exceptionally well, organizations can minimize the risk of technical failure and maximize the transformative potential of artificial intelligence. For The Softix, this means continuing to deliver precision-engineered solutions that transform the “Think It” phase into a high-performance reality.

As the era of developer-only app creation ends, the focus shifts to strategic curation and human-AI collaboration. Those who view AI not as a feature but as the foundation of the user experience will be the ones to define the next decade of mobile technology. The path forward is one of continuous monitoring, ethical vigilance, and an unwavering focus on delivering value that feels uniquely human-centered in an increasingly automated world. Ready to lead the intelligence revolution? Partner with The Softix to engineer your growth and transform your vision into a high-performance digital reality.