The contemporary digital economy operates at a temporal scale where milliseconds represent the primary boundary between consumer engagement and user abandonment. As the global web transitions into a more decentralized and intelligent framework in 2026, the demand for instantaneous data delivery has transformed from a competitive advantage into a fundamental prerequisite for business survival. For a strategic technology partner like The Softix, understanding the underlying mechanisms of web acceleration is essential for navigating the complexities of modern software development, from initial Minimum Viable Product validation for startups to the modernization of legacy mission-critical systems for large-scale enterprises. The evolution of the web has been characterized by a persistent battle against latency, a struggle that has necessitated a series of profound technological shifts across infrastructure, protocols, and execution models.

The digital landscape of the mid-2020s is defined by a holistic approach to performance, where application design, content delivery infrastructure, and data optimization work in concert to eliminate friction at every layer of the user experience. This paradigm shift moves beyond simple hardware upgrades, favoring instead a sophisticated orchestration of distributed intelligence and algorithmic efficiency. The following analysis explores the ten pivotal technological ideas that have fundamentally accelerated the web, examining their origins, technical mechanisms, and the profound impact they exert on modern business outcomes.

The Physical Layer of Acceleration: The Broadband and 5G Infrastructure Revolution

The narrative of web speed begins with the foundational transition from narrowband dial-up to broadband infrastructure. In the earliest era of the internet, typical connection speeds were limited to 56 Kbps, a constraint that rendered the web a primarily text-based medium where even low-resolution imagery posed a significant performance challenge. The introduction of Digital Subscriber Line (DSL), cable, and fiber-optic networks introduced the concept of “always-on” connectivity, which eliminated the temporal friction of connection establishment and provided the bandwidth necessary for multimedia content and real-time streaming.

In 2026, this physical layer has evolved into the 5G revolution, which provides the ultra-low latency and gigabit-per-second speeds required for a new generation of real-time services. 5G technology fundamentally changes how organizations approach mobile engagement, allowing for high-fidelity video and interactive applications to load without the buffering delays that characterized the 4G era. This infrastructure is particularly critical for the Internet of Things (IoT) and edge-based applications, as it provides a reliable, high-capacity transport layer that can support the density of devices required by smart cities and modern industrial environments.

Evolution of Connectivity and Bandwidth Metrics

| Technology Era | Typical Speed | Primary Technical Impact | Latency Characteristics |
| --- | --- | --- | --- |
| Dial-Up Internet | Up to 56 Kbps | Basic text browsing; no rich media | Extremely High (>150ms) |
| Broadband (DSL/Cable) | 5 Mbps – 100 Mbps | Enabled multimedia and early video | Moderate (30ms – 60ms) |
| 4G LTE | 10 Mbps – 100 Mbps | Mainstreamed mobile apps and HD streaming | Low (20ms – 40ms) |
| 5G Networks | 1 Gbps+ | Real-time services; ultra-low latency | Ultra-Low (<10ms) |

The transition between these eras reflects a shift in the performance bottleneck. As bandwidth became a commodity, the focus of web acceleration moved from the capacity of the connection to the efficiency with which data is delivered and processed. For enterprises looking to modernize their systems, 5G offers the ability to offload rendering from low-powered client devices, making the digital experience smoother regardless of local hardware constraints.
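The shift in bottleneck can be made concrete with a rough model. The sketch below assumes a deliberately simplified formula (one round trip of latency plus serialization time) and uses illustrative speed and latency pairs loosely based on the table above; the function name and the 100 KB resource size are hypothetical choices, not measurements.

```python
def transfer_time_ms(size_kb: float, bandwidth_mbps: float, latency_ms: float) -> float:
    """Rough fetch time for one resource: one round trip plus serialization time."""
    size_bits = size_kb * 1000 * 8  # treat 1 KB as 1,000 bytes
    serialization_ms = size_bits / (bandwidth_mbps * 1_000_000) * 1000
    return latency_ms + serialization_ms


# Illustrative (speed in Mbps, latency in ms) pairs; assumptions, not benchmarks.
ERAS = {
    "dial-up": (0.056, 150),
    "broadband": (100, 40),
    "5g": (1000, 10),
}

for name, (mbps, latency) in ERAS.items():
    print(f"{name:10s} {transfer_time_ms(100, mbps, latency):10.1f} ms")
# dial-up       14435.7 ms  (bandwidth dominates)
# broadband        48.0 ms  (latency dominates)
# 5g               10.8 ms  (latency dominates)
```

Under these assumptions, once bandwidth is plentiful almost all of the remaining time is latency, which is why the later techniques focus on round trips and distance rather than raw capacity.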

Decentralized Delivery: The Rise of Content Delivery Networks and Edge Computing

The realization that data transfer is ultimately bounded by the speed of light led to the development of Content Delivery Networks (CDNs), which replace the centralized server model with a geographically distributed network of servers. A CDN caches static copies of website assets such as images, CSS files, and JavaScript on servers located in Points of Presence (PoPs) worldwide. When a user in London requests a file from a New York-based origin server, the CDN intercepts the request and serves the file from a nearby edge node, in London itself or in a neighboring hub such as Paris or Frankfurt, significantly reducing the physical distance the data must travel.
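A back-of-the-envelope calculation shows why distance matters. The sketch below assumes light travels through optical fiber at roughly 200 km per millisecond (about two thirds of its speed in a vacuum) and uses approximate great-circle distances; real cable routes are longer, so these figures are lower bounds rather than measurements.

```python
FIBER_KM_PER_MS = 200.0  # assumption: light in fiber covers roughly 200 km per millisecond


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip time imposed by propagation delay alone."""
    return 2 * distance_km / FIBER_KM_PER_MS


# Approximate great-circle distances (assumptions for illustration).
LONDON_TO_NEW_YORK_KM = 5570
LONDON_TO_FRANKFURT_KM = 640

print(min_round_trip_ms(LONDON_TO_NEW_YORK_KM))   # about 55.7 ms per round trip
print(min_round_trip_ms(LONDON_TO_FRANKFURT_KM))  # about 6.4 ms per round trip
```

Because loading a page usually takes several round trips, the gap between the two figures multiplies quickly.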

The integration of edge computing has expanded the capabilities of these networks beyond simple caching. While a traditional CDN focuses on storage and delivery, edge computing allows for the execution of application logic and data processing at the network’s periphery. This shift is critical for applications that cannot tolerate the latency of a round-trip to a central data center, such as real-time consultation in telemedicine or instant personalization in e-commerce. By processing data closer to the source, edge computing minimizes lag, conserves bandwidth, and enhances reliability by eliminating single points of failure.

Structural Comparison: CDN vs. Edge Computing Platforms

| Feature | Content Delivery Network (CDN) | Edge Computing |
| --- | --- | --- |
| Primary Function | Caching and delivering static content | Running application logic and processing data |
| Data Handling | Handles static data that rarely changes | Designed for dynamic, real-time data |
| Architectural Role | A specialized tool for content distribution | A broad architectural model for local compute |
| Security Impact | DDoS mitigation through traffic distribution | Decentralized processing against network attacks |
| Business Value | Reduced latency and server load | Real-time personalization and IoT integration |

The programmable edge allows sophisticated functions, such as image processing, security validation, and API acceleration, to run near the user. Platforms like Fastly and Cloudflare have demonstrated significant performance gains for websites that migrate to them, with some organizations seeing a 57% improvement in Time-to-First-Byte (TTFB) and a 17% improvement in Largest Contentful Paint (LCP). For a software development agency like The Softix, leveraging edge computing is a standard practice for building scalable, high-performance applications that remain responsive during traffic spikes.

Protocol Evolution: The Transformation from TCP to HTTP/3 and QUIC

The Hypertext Transfer Protocol (HTTP), which governs communication between browsers and servers, has undergone fundamental architectural changes to keep pace with the increasing complexity of web applications. The transition from HTTP/1.1 to HTTP/2 introduced multiplexing, allowing multiple requests and responses to be sent simultaneously over a single Transmission Control Protocol (TCP) connection. While this improved efficiency, HTTP/2 remained susceptible to head-of-line blocking at the TCP layer, where the loss of a single packet stalls every stream sharing the connection until that packet is retransmitted.

HTTP/3 solves this by replacing TCP with QUIC (Quick UDP Internet Connections), a transport protocol built on top of the User Datagram Protocol (UDP). QUIC combines the features of TCP such as reliability and congestion control with the speed and flexibility of UDP, enabling connections to be established up to 33% faster than HTTP/2. By integrating security features like TLS 1.3 from the start, QUIC eliminates the need for a separate handshake, reducing connection setup times and improving performance on unstable networks like mobile 5G or high-latency rural connections.
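The handshake savings can be sketched by counting round trips before the first request can be sent. The counts below are the commonly cited ones for full handshakes (one round trip for TCP, two for TLS 1.2, one for TLS 1.3, one for QUIC with its integrated TLS 1.3, and zero for a resumed QUIC connection); the dictionary and function names are illustrative.

```python
# Round trips needed before the client can send its first HTTP request.
SETUP_ROUND_TRIPS = {
    "TCP + TLS 1.2": 1 + 2,        # TCP handshake, then a two-round-trip TLS handshake
    "TCP + TLS 1.3": 1 + 1,        # TCP handshake, then a one-round-trip TLS handshake
    "QUIC (first visit)": 1,       # transport and TLS 1.3 handshakes are combined
    "QUIC (0-RTT resumption)": 0,  # request can ride along with the first packet
}


def setup_delay_ms(stack: str, rtt_ms: float) -> float:
    """Connection setup delay for a given protocol stack and round-trip time."""
    return SETUP_ROUND_TRIPS[stack] * rtt_ms


for stack in SETUP_ROUND_TRIPS:
    print(f"{stack:26s} {setup_delay_ms(stack, 50):6.1f} ms")
# With a 50 ms round trip: 150.0, 100.0, 50.0 and 0.0 ms respectively.
```

This model ignores packet loss and server processing time, so it only illustrates the ordering, not real-world timings.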

Performance Metrics across HTTP Protocol Versions

| Protocol Version | Transport | Connection Setup (ms) | Head-of-Line Blocking |
| --- | --- | --- | --- |
| HTTP/1.1 | TCP | 50 – 120 ms | Yes (Significant) |
| HTTP/2 | TCP | 40 – 100 ms | Yes (TCP-level) |
| HTTP/3 (QUIC) | UDP | 20 – 50 ms | No (Stream-level) |

One of the most impactful features of HTTP/3 is connection migration, which allows a user to move between networks, such as switching from home Wi-Fi to a mobile cellular connection, without interrupting active data streams. This resilience is vital for mobile users who expect a seamless experience. In real-world benchmarks, HTTP/3 users have shown a 13.8% improvement in LCP scores compared to those on HTTP/2, a gain that directly translates to better Core Web Vitals and search engine rankings.

Payload Optimization: The Strategic Use of Brotli and Advanced Compression

As web pages become more resource-heavy, the efficiency of data compression has become a critical factor in performance. While Gzip, which uses the DEFLATE algorithm, has been the industry standard for decades, Brotli, developed by Google, offers a more efficient alternative for text-based assets like HTML, CSS, and JavaScript. Brotli uses second-order context modeling and a predefined static dictionary of common web strings, which typically yields files 15-30% smaller than Gzip output.

This reduction in file size is particularly effective for highly repetitive syntax found in modern JavaScript bundles and CSS frameworks. For instance, a 500KB JavaScript bundle can be reduced to 112KB using Brotli, compared to 135KB with Gzip, a 17% saving that results in fewer TCP packets and faster download times. While Brotli requires more CPU power during the compression phase, its decompression speed on the user’s browser is exceptionally fast, ensuring that the performance benefits are not offset by processing delays.
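The arithmetic behind the bundle example can be checked directly. This small sketch uses only the figures quoted above (500 KB uncompressed, 135 KB with Gzip, 112 KB with Brotli); the helper name is illustrative.

```python
def saving_percent(baseline_kb: float, compressed_kb: float) -> float:
    """Size reduction of compressed_kb relative to baseline_kb, as a percentage."""
    return (baseline_kb - compressed_kb) / baseline_kb * 100


print(round(saving_percent(500, 135), 1))  # Gzip vs. uncompressed:   73.0
print(round(saving_percent(500, 112), 1))  # Brotli vs. uncompressed: 77.6
print(round(saving_percent(135, 112), 1))  # Brotli vs. Gzip:         17.0
```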

Compression Efficiency: Brotli vs. Gzip for Web Assets

| Asset Type | Gzip Size (Typical) | Brotli Size (Typical) | Percentage Improvement |
| --- | --- | --- | --- |
| JavaScript Bundle | 135 KB | 112 KB | 17% |
| CSS Stylesheet | 45 KB | 32 KB | 28.9% |
| HTML Document | 22 KB | 17 KB | 22.7% |
| Overall Saving | Standard | Enhanced | 15% – 30% |

The strategic application of Brotli is especially beneficial for content-heavy APIs and websites served to mobile users on high-latency connections. By delivering smaller payloads, organizations reduce total bandwidth throughput and infrastructure costs while simultaneously improving the perceived load time for their users.
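Servers typically decide between Brotli and Gzip per request by inspecting the `Accept-Encoding` header the browser sends. The following is a minimal sketch of that negotiation, assuming the server prefers Brotli whenever the client accepts it. It deliberately ignores the `*` wildcard and the relative ordering of client q-values, and the function names are hypothetical rather than any particular server's API.

```python
def parse_accept_encoding(header: str) -> dict[str, float]:
    """Map each encoding in an Accept-Encoding header to its q-value (default 1.0)."""
    prefs: dict[str, float] = {}
    for item in header.split(","):
        item = item.strip()
        if not item:
            continue
        name, _, params = item.partition(";")
        q = 1.0
        params = params.strip()
        if params.startswith("q="):
            try:
                q = float(params[2:])
            except ValueError:
                q = 0.0  # treat a malformed q-value as "not acceptable"
        prefs[name.strip().lower()] = q
    return prefs


SERVER_PREFERENCE = ("br", "gzip")  # assumption: Brotli first, then Gzip


def choose_encoding(header: str) -> str:
    """Pick the first server-preferred encoding the client accepts, else no compression."""
    prefs = parse_accept_encoding(header)
    for encoding in SERVER_PREFERENCE:
        if prefs.get(encoding, 0.0) > 0:
            return encoding
    return "identity"


print(choose_encoding("gzip, deflate, br"))  # br
print(choose_encoding("gzip, deflate"))      # gzip
```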

For a software agency like The Softix, implementing Brotli on high-performance servers is an essential step in ensuring that their clients’ WordPress sites and web applications load at lightning speed.

Architectural Flexibility: The Evolution of Web Rendering Strategies

The method by which a web application generates and delivers its content, known as its rendering strategy, has a profound impact on speed, SEO, and the user experience. Historically, Server-Side Rendering (SSR) was the standard, where the server generates the full HTML for each request. While SSR provides fast initial load times and is highly SEO-friendly, it can be resource-intensive for the server and result in slower subsequent navigation. Client-Side Rendering (CSR), which builds the interface in the browser using JavaScript, offers rich interactions but often suffers from slow initial loads as the browser must wait for scripts to download and execute.

To address these limitations, modern frameworks have introduced Static Site Generation (SSG) and Hybrid rendering models. SSG builds pages at build time, allowing them to be served as static files via a CDN, which results in the fastest possible First Contentful Paint (FCP). Hybrid models, utilized by platforms like Next.js, allow developers to combine these strategies, using SSG for stable marketing pages, SSR for dynamic product listings, and CSR for highly interactive components.

Comparison of Rendering Metrics and Business Impact

| Metric | Server-Side (SSR) | Client-Side (CSR) | Static Generation (SSG) |
| --- | --- | --- | --- |
| First Contentful Paint | Fast | Slow (Blank screen) | Fastest (Instant) |
| Time to Interactive | Delayed (Hydration) | Immediate after load | Excellent |
| SEO Performance | Strong | Weak (JS dependent) | Strong |
| Server Resource Use | High | Low | Minimal |
| Scalability | Limited | Good | Excellent |

For businesses that rely on organic traffic, SSR or SSG is essential to ensure that search engines can crawl and index content immediately. In contrast, CSR is better suited for authenticated user dashboards where SEO is not a priority. The ability to selectively update static content at runtime through techniques like Incremental Static Regeneration (ISR) has further narrowed the gap between performance and data freshness, allowing for highly scalable, up-to-date web experiences.
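The idea behind ISR can be modeled as a cache that serves a stored page immediately and rebuilds it once it is older than a revalidation window. The sketch below is a simplified Python model, not the API of any framework: the class and parameter names are hypothetical, and the rebuild runs inline here, whereas real implementations regenerate in the background.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class IsrCache:
    render: Callable[[str], str]   # builds the HTML for a path
    revalidate_seconds: float      # how long a page counts as fresh
    now: Callable[[], float]       # injectable clock, which keeps the demo deterministic
    _pages: dict = field(default_factory=dict)

    def get(self, path: str) -> str:
        entry = self._pages.get(path)
        if entry is None:
            # First request: build the page and store it.
            html = self.render(path)
            self._pages[path] = (html, self.now())
            return html
        html, built_at = entry
        if self.now() - built_at >= self.revalidate_seconds:
            # Stale: this visitor still gets the old copy; the next one gets the rebuild.
            self._pages[path] = (self.render(path), self.now())
        return html


clock = [0.0]
builds = []


def render(path: str) -> str:
    builds.append(path)
    return f"<h1>{path} v{len(builds)}</h1>"


cache = IsrCache(render=render, revalidate_seconds=60, now=lambda: clock[0])
first = cache.get("/pricing")   # built on first request (v1)
clock[0] = 90
second = cache.get("/pricing")  # stale v1 is served, rebuild is triggered
third = cache.get("/pricing")   # fresh v2 is served
```

No visitor waits for a rebuild in this model, which is the property that lets static pages stay fast while still being refreshed.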

Native Execution in the Browser: The Impact of WebAssembly

WebAssembly (Wasm) represents one of the most significant performance breakthroughs in web history, providing a low-level, assembly-like language with a compact binary format that runs at near-native speeds in the browser. Wasm addresses the performance bottlenecks inherent in JavaScript, which must be parsed and JIT-compiled by the browser engine, a process that can be prohibitive for computationally intensive tasks. By compiling high-performance languages like C++, Rust, and Go into Wasm modules, developers can execute complex logic far faster than traditional scripting allows.

Real-world benchmarks indicate that WebAssembly consistently outperforms JavaScript in CPU-bound tasks, showing speed improvements of 2x to 6x for image processing, machine learning models, and numerical simulations. Because Wasm works alongside JavaScript, developers can maintain the flexibility of JS for the user interface while offloading performance-critical tasks to the Wasm sandbox. This is particularly valuable for modern web applications that involve 3D gaming, computer vision, or real-time video editing.

WebAssembly vs. JavaScript Performance Benchmark

| Task Description | JavaScript (JIT) Speed | WebAssembly Speed | Improvement Factor |
| --- | --- | --- | --- |
| Image Processing Filter | 100 ms | 25 ms | 4x Faster |
| 4K Video Transcoding | Inefficient | Optimized | 6x+ Faster |
| Recursive Computation | 500 ms | 150 ms | ~3.3x Faster |
| 1 Million Row CSV Parse | Slow (UI Freeze) | Near-Native | Significant |

The ability to port existing high-performance libraries directly to the web using tools like Emscripten has allowed mature software suites to migrate to the browser without being rewritten in JavaScript. This has expanded the boundaries of what is possible on the web, enabling a level of sophisticated computing that was previously reserved for native desktop applications. For a specialized agency like The Softix, integrating WebAssembly is a strategic choice when building mission-critical enterprise systems that require heavy data processing.

Network Resilience and Offline Capability: PWAs and Service Workers

Progressive Web Applications (PWAs) have bridged the gap between the web and native mobile apps by providing a faster, more resilient user experience that works across all devices. The core of PWA performance is the Service Worker, a JavaScript file that runs in the background and handles network interception and caching. By caching essential UI components and data, Service Workers allow websites to load instantly on repeat visits and even function in offline or low-connectivity environments.
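The caching behavior described above is often called a cache-first strategy. Real Service Workers are written in JavaScript against the browser's Cache API; the sketch below only models the decision logic in Python, with a hypothetical `fetch` callable that returns `None` when the network is unavailable.

```python
from typing import Callable, Dict, Optional


class CacheFirst:
    """Answer from the cache when possible; otherwise go to the network and remember the result."""

    def __init__(self, fetch: Callable[[str], Optional[bytes]]):
        self._fetch = fetch
        self._cache: Dict[str, bytes] = {}

    def handle(self, url: str) -> Optional[bytes]:
        if url in self._cache:
            return self._cache[url]   # repeat visit: no network needed
        body = self._fetch(url)       # None means the network is unavailable
        if body is not None:
            self._cache[url] = body
        return body


state = {"online": True}


def fake_fetch(url: str) -> Optional[bytes]:
    return f"body of {url}".encode() if state["online"] else None


worker = CacheFirst(fake_fetch)
first = worker.handle("/app.css")          # fetched and cached while online
state["online"] = False
offline_hit = worker.handle("/app.css")    # still served while offline
offline_miss = worker.handle("/new.css")   # never cached, so nothing to serve
```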

The business impact of PWAs is demonstrated through numerous case studies from leading tech companies. Twitter Lite, for example, saw a 65% increase in pages per session and a 75% increase in Tweets sent after adopting Service Worker caching. Similarly, Pinterest’s mobile web rewrite led to a 40% increase in time spent on the site and a 44% boost in ad revenue, as their time-to-interactive dropped from 23 seconds to just 3.9 seconds.

Business Success Metrics for Progressive Web Apps

| Organization | Implementation Impact | User Engagement Change | Conversion Impact |
| --- | --- | --- | --- |
| Twitter Lite | 30% faster launch time | 65% increase in session depth | High |
| Pinterest | TTI improved by 83% | 60% higher core engagements | 44% Revenue Increase |
| Starbucks | 99% smaller than native app | Significant order increase | Improved retention |
| Tinder | Load time: 11.9s to 4.6s | Increased swiping activity | Higher matches |
| MakeMyTrip | 38% faster page load | Triple conversion rate | 160% higher retention |

By minimizing data usage and optimizing the initial load through techniques like the PRPL pattern (Push, Render, Pre-cache, Lazy-load), PWAs ensure that users in regions with spotty internet connectivity can still access services reliably. This accessibility is a critical factor for global organizations seeking to expand their user base in emerging markets. For the client-focused strategy at The Softix, building PWAs ensures that applications are “future-ready” and capable of providing a seamless experience regardless of network stability.

Intelligent Anticipation: 103 Early Hints and the Speculation Rules API

One of the most persistent delays in web performance is the “server think-time,” the period when the browser connection is idle while the server is processing a request. The 103 Early Hints status code addresses this by allowing the server to send preliminary HTTP headers to the browser before the final response is ready. These hints inform the browser which critical resources (e.g., stylesheets, web fonts, or scripts) it should begin preloading or which domains it should preconnect to while the server finishes its work.
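On the wire, an Early Hints response is simply an interim status line followed by `Link` headers. The sketch below builds such a message by hand to show its shape; the helper name and the example URLs are hypothetical, and in practice the web server or CDN usually emits these bytes.

```python
from typing import Iterable, Optional, Tuple


def early_hints(links: Iterable[Tuple[str, str, Optional[str]]]) -> bytes:
    """Build a raw HTTP/1.1 103 Early Hints message from (url, rel, as_type) tuples."""
    lines = ["HTTP/1.1 103 Early Hints"]
    for url, rel, as_type in links:
        value = f"<{url}>; rel={rel}"
        if as_type:
            value += f"; as={as_type}"
        lines.append(f"Link: {value}")
    # A blank line ends the interim response; the final response follows later.
    return ("\r\n".join(lines) + "\r\n\r\n").encode("ascii")


hints = early_hints([
    ("/css/main.css", "preload", "style"),          # hypothetical stylesheet path
    ("https://cdn.example.com", "preconnect", None),  # hypothetical third-party origin
])
print(hints.decode("ascii"))
```

The server sends this message immediately and follows it with the normal `200` response once the page has been generated.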

The Speculation Rules API takes this proactive approach further by allowing developers to define rules for prefetching or prerendering pages before a user even clicks a link. By anticipating user behavior, such as hovering over a link or moving the cursor toward a button, the browser can download the HTML for the next page or even fully render it in an invisible background tab. When the user eventually navigates, the transition appears almost instant, as the page is already prepared in the browser’s memory.

Perceived Performance Gains: Speculation Rules

| Action Type | Perceived Load Impact | Mechanism | User Experience |
| --- | --- | --- | --- |
| No Speculation | Baseline (10,644 ms) | Standard fetch on click | Traditional waiting |
| Prefetch | 23% Faster (8,195 ms) | Fetches HTML in advance | Reduced wait |
| Prerender | 47% Faster (5,679 ms) | Fully renders in background | Near-instant |

These technologies are particularly effective for e-commerce sites and news platforms where users follow predictable navigation patterns. By utilizing “eagerness” settings, developers can balance the performance benefits against resource consumption, ensuring that prerendering only occurs when there is a high probability of navigation. For high-traffic sites managed by The Softix, implementing these predictive loading techniques is a key strategy for improving Core Web Vitals like Largest Contentful Paint (LCP) and enhancing user retention.
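Speculation rules are declared as JSON inside a `<script type="speculationrules">` element. The sketch below generates such a tag on the server, assuming a document rule that matches links by URL pattern; the helper name and the `/products/*` pattern are hypothetical.

```python
import json

VALID_ACTIONS = {"prefetch", "prerender"}
VALID_EAGERNESS = {"immediate", "eager", "moderate", "conservative"}


def speculation_rules_script(action: str, href_pattern: str, eagerness: str = "moderate") -> str:
    """Return a script tag asking the browser to prefetch or prerender matching links."""
    if action not in VALID_ACTIONS:
        raise ValueError(f"unknown action: {action}")
    if eagerness not in VALID_EAGERNESS:
        raise ValueError(f"unknown eagerness: {eagerness}")
    rules = {action: [{"where": {"href_matches": href_pattern}, "eagerness": eagerness}]}
    return f'<script type="speculationrules">{json.dumps(rules)}</script>'


tag = speculation_rules_script("prerender", "/products/*")
print(tag)
```

A more conservative eagerness value waits for a stronger signal of intent before speculating, which trades some speed for lower wasted bandwidth.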

Visual Performance: The Shift to WebP and AVIF Image Formats

Images typically account for the largest portion of a web page’s total weight, making them a primary target for optimization. The transition from JPEG and PNG to modern formats like WebP and AVIF has introduced a new era of visual efficiency. WebP, developed by Google, offers a 25-35% reduction in file size compared to JPEG while maintaining comparable quality, and it supports both transparency and animation, making it a versatile replacement for older formats.

AVIF (AV1 Image File Format) is the next evolution, built on the AV1 video codec to achieve up to 50% better compression than JPEG. While AVIF is more computationally intensive to decode than WebP, it provides superior image quality for photographic content and better support for high dynamic range (HDR) and wide color gamuts. The use of these formats directly impacts loading speeds and SEO, as smaller image files mean fewer bytes on the wire and less bandwidth consumption for mobile users.

Image Format Comparison: Weight and Quality

| Format | Compression Level | Transparency | Best Use Case | Decoding Complexity |
| --- | --- | --- | --- | --- |
| JPEG | Low (Lossy) | No | Legacy support | Easy (Low CPU) |
| WebP | Medium-High | Yes | Web default; illustrations | Moderate |
| AVIF | Very High | Yes | Photos; HDR; banners | High (Heavy CPU) |

Organizations like YouTube have found that switching to WebP thumbnails resulted in 10% faster page loads. In 2026, the standard practice is to use a hybrid approach: serving AVIF as the primary image source for modern browsers while falling back to WebP or JPEG for older clients. This ensures that every user receives the most optimized version of the site’s visuals, balancing quality with speed.
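In HTML, this fallback chain is usually expressed with a `<picture>` element: the browser picks the first `<source>` whose type it supports and otherwise uses the `<img>`. The sketch below generates that markup, assuming the three variants share a base path and differ only by extension; the helper name and file paths are hypothetical.

```python
from html import escape


def picture_tag(base_path: str, alt: str, width: int, height: int) -> str:
    """Markup that offers AVIF first, then WebP, then a JPEG fallback."""
    base = escape(base_path, quote=True)
    return (
        "<picture>\n"
        f'  <source srcset="{base}.avif" type="image/avif">\n'
        f'  <source srcset="{base}.webp" type="image/webp">\n'
        f'  <img src="{base}.jpg" alt="{escape(alt, quote=True)}" '
        f'width="{width}" height="{height}">\n'
        "</picture>"
    )


print(picture_tag("/img/hero", "Product hero banner", 1200, 600))
```

Setting explicit `width` and `height` lets the browser reserve space before the image arrives, which helps avoid layout shift.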

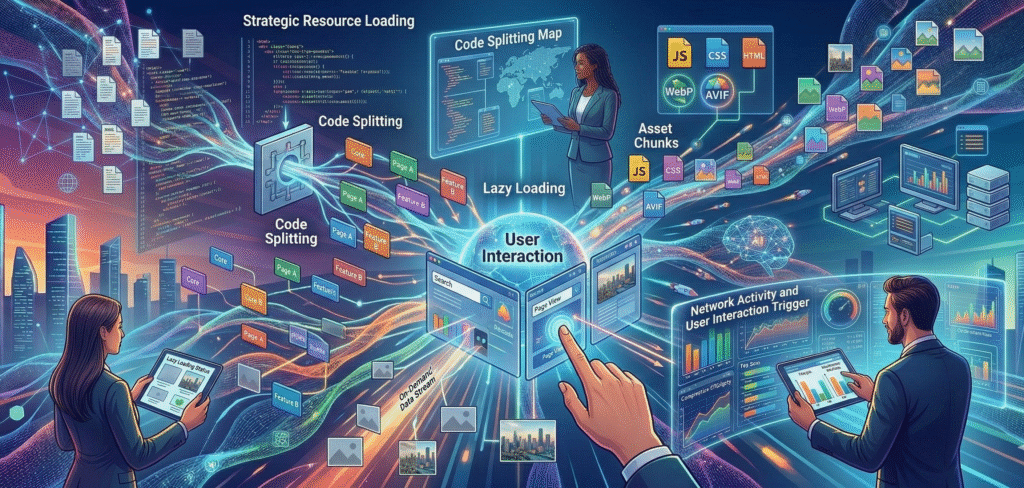

Strategic Resource Loading: Lazy Loading and Code Splitting

Optimizing how and when resources are loaded is as important as optimizing the resources themselves. Lazy loading is a technique that delays the loading of non-critical assets such as off-screen images or background videos until they are actually needed as the user scrolls. This reduces the initial page load time and bandwidth consumption, particularly on lengthy, image-rich pages. However, it must be used carefully; lazy loading “above-the-fold” content can actually hurt performance by delaying the Largest Contentful Paint (LCP).

Code splitting complements this by breaking down large JavaScript bundles into smaller, manageable chunks that are delivered on demand. By delivering only the code necessary for the current view, developers can significantly reduce the Time to Interactive (TTI) and the overhead of JavaScript execution. Studies have shown that combining lazy loading and code splitting can achieve up to a 40% reduction in page load times, enhancing the overall responsiveness of modern web applications.
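The caveat about above-the-fold content can be applied mechanically when generating markup: load the first image or two eagerly and mark the rest with the native `loading="lazy"` attribute. The sketch below assumes the images are listed in page order and that only the first `eager_count` are visible on initial load; both the helper name and that cutoff are illustrative assumptions.

```python
from html import escape
from typing import List, Sequence


def img_tags(sources: Sequence[str], eager_count: int = 1) -> List[str]:
    """Eager-load the first eager_count images and lazy-load everything after them."""
    tags = []
    for index, src in enumerate(sources):
        loading = "eager" if index < eager_count else "lazy"
        tags.append(f'<img src="{escape(src, quote=True)}" loading="{loading}" alt="">')
    return tags


for tag in img_tags(["/img/hero.jpg", "/img/gallery-1.jpg", "/img/gallery-2.jpg"]):
    print(tag)
# The hero image is eager; both gallery images are lazy.
```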

Impact of Resource Optimization Techniques

| Technique | Primary Benefit | Potential Trade-off | Performance Metric Impact |
| --- | --- | --- | --- |
| Lazy Loading | Reduces initial bytes loaded | Can delay LCP if overused | Improved FCP; lower bandwidth |
| Code Splitting | Decreases TTI and JS overhead | Increases request complexity | Significant TTI reduction |
| Preloading | Prioritizes critical assets | Can block other resources | Improved LCP and FCP |
| Predictive Prefetch | Near-instant navigation | May waste user data/battery | Improved Perceived Load |

For a strategic partner like The Softix, implementing these techniques is a standard part of their performance-first development workflow. By using tools like Lighthouse for regular audits, they can identify non-blocking resources for lazy loading and optimize the “critical path” for rendering. This data-driven approach ensures that the most important content is always visible to the user as quickly as possible.

The Business ROI of Speed: Benchmarks and Metrics in 2026

In 2026, web speed is no longer just a technical metric; it is a fundamental driver of profitability. The correlation between performance and business outcomes is stark: as page load time increases, bounce rates rise and conversion rates plummet. In the e-commerce sector, Customer Acquisition Costs (CAC) have surged by 40% in just two years, making it imperative to maximize the conversion potential of every visitor. Organizations are increasingly moving away from traditional daily Return on Ad Spend (ROAS) in favor of the LTV:CAC ratio, the Lifetime Value of a customer compared to their acquisition cost, as the ultimate indicator of business stability.

A healthy LTV:CAC ratio is widely considered to be 3:1 or higher. Achieving this requires a high-performance web experience that fosters the emotional loyalty and repeat purchase behavior necessary for long-term profitability. Speed in the checkout flow is particularly critical, as high cart abandonment rates, often a result of friction and slow loading, represent a significant loss of potential revenue.
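The 3:1 rule of thumb is simple to check in code. In the sketch below, the $156 acquisition cost is the Food & Beverage median from this report's benchmark table, while the $468 lifetime value is a purely hypothetical figure chosen for illustration; the function names are likewise illustrative.

```python
def ltv_to_cac(lifetime_value: float, acquisition_cost: float) -> float:
    """Lifetime value earned per unit of currency spent acquiring the customer."""
    if acquisition_cost <= 0:
        raise ValueError("acquisition cost must be positive")
    return lifetime_value / acquisition_cost


def is_healthy(lifetime_value: float, acquisition_cost: float, threshold: float = 3.0) -> bool:
    """True when the ratio meets the commonly cited 3:1 threshold."""
    return ltv_to_cac(lifetime_value, acquisition_cost) >= threshold


print(ltv_to_cac(468, 156))  # 3.0
print(is_healthy(468, 156))  # True
print(is_healthy(300, 156))  # False
```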

Industry Benchmarks for E-commerce Performance (2026)

| Vertical | Avg. Conversion Rate | CAC Median | ROAS (Paid Search) |
| --- | --- | --- | --- |
| Food & Beverage | 6.02% | $156 (Global) | 4x – 6x |
| Beauty & Personal Care | 4.89% | $116 (B2C CPL) | 4x – 6x |
| B2B Ecommerce | 5:1 (ROAS) | $148 (B2B CPL) | 5x – 7x |
| Luxury & Jewelry | 0.95% | High | 6x+ |

The strategic imperative for 2026 is building mechanisms that convert “trend shoppers” into “true loyalists.” This transition is powered by speed, personalization, and seamless interactions, all of which are made possible by the technologies discussed in this report. For a startup or growing business working with The Softix, prioritizing these performance metrics is not just about a faster site; it’s about building a sustainable, profitable digital enterprise.

The Future Frontier: AI and the Distributed Edge (2026–2027)

Looking forward, the evolution of web acceleration will be increasingly driven by the integration of Artificial Intelligence at the network’s edge. In 2026, the tech industry is shifting from massive, centralized Large Language Models (LLMs) to smaller, task-specific Small Language Models (SLMs) that can run directly on edge devices or in decentralized environments. This “Edge AI” transition prioritizes efficiency and real-time processing, enabling websites to interpret and respond to user behavior with new levels of precision without the latency of cloud-based analysis.

This move toward automation and distributed intelligence will revolutionize how websites are built and maintained. AI-driven tools will soon handle repetitive tasks such as debugging, testing, and even real-time layout adjustments based on individual user behavior. For organizations to stay competitive, they must adapt to these “cloud-native” and “edge-resident” architectures, ensuring that their systems are not only fast today but capable of supporting the autonomous, agentic experiences of tomorrow. The partnership with an innovative firm like The Softix, which focuses on modernizing systems and pioneering trust through technology, is essential for businesses navigating this transformative phase of internet history.