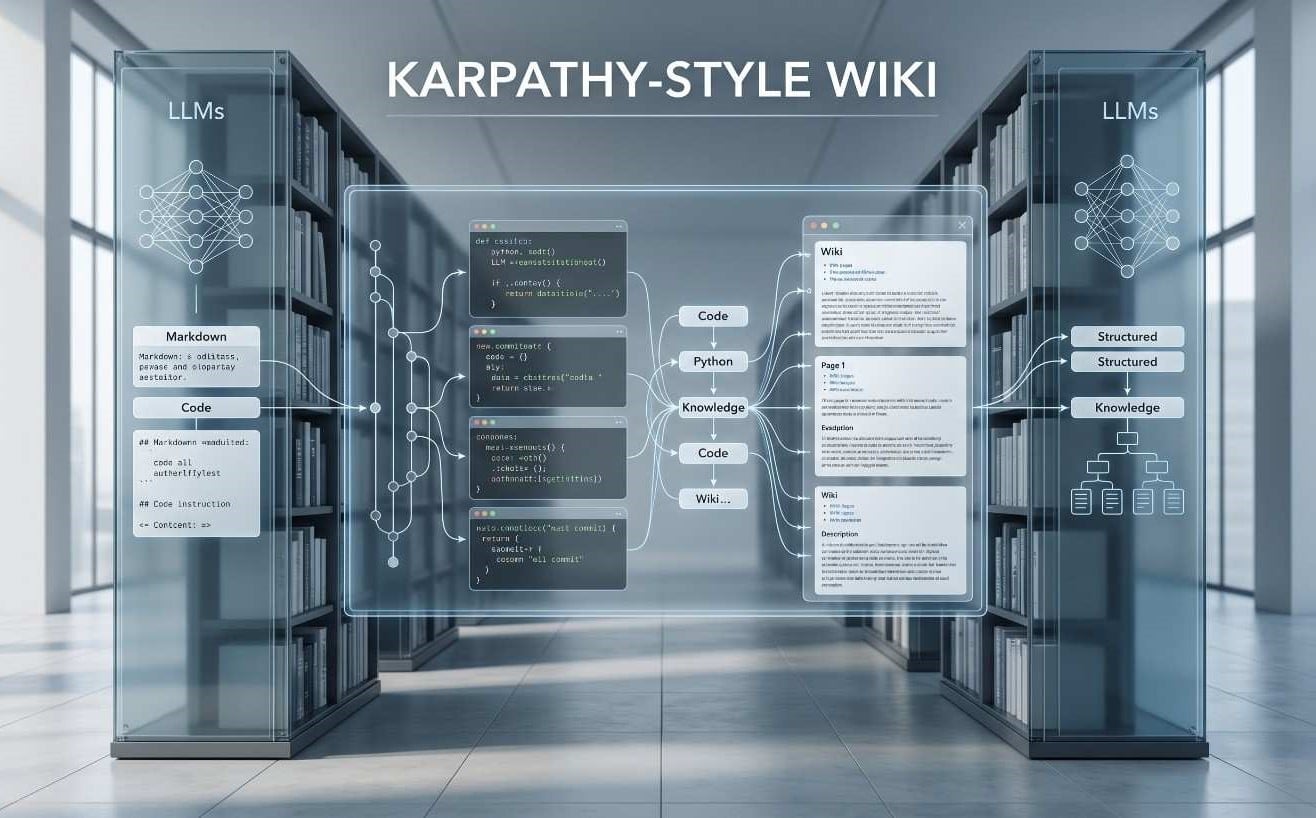

The evolution of personal and organizational knowledge management has reached a critical juncture where the traditional methods of information retrieval are increasingly insufficient for the demands of the modern cognitive workload. In April 2026, Andrej Karpathy, a seminal figure in the field of artificial intelligence and a founding member of OpenAI, introduced a methodology that deviates fundamentally from the established norms of Retrieval-Augmented Generation (RAG). This approach, colloquially referred to as the LLM Wiki, posits that the primary utility of large language models lies not in their ability to search through vast, unstructured datasets at the moment of a query, but in their capacity to act as compilers of knowledge. By treating raw information (research papers, technical documentation, and personal notes) as source code that needs to be compiled into a persistent, interlinked, markdown-based substrate, the LLM Wiki pattern enables a stateful form of intelligence where insights compound over time. This system addresses the inherent “amnesia” of standard AI interactions, where information is rediscovered from scratch during every session, by creating a human-readable and version-controlled knowledge graph maintained by an artificial intelligence agent.

The Theoretical Foundation of Compilation-Based Knowledge Systems

The conceptual framework of the LLM Wiki rests on the observation that traditional RAG systems carry a significant “Context Debt”. In a standard RAG pipeline, documents are chunked into smaller fragments, converted into vector embeddings, and stored in a database; when a user asks a question, the system retrieves semantically similar chunks to provide an answer. While functional, this process is essentially a sophisticated form of search that lacks a synthesis layer. The system fails to build a cohesive mental model of the subject matter, and any cross-references or contradictions between different documents are often missed because the model only sees isolated fragments. Karpathy’s proposal flips this workflow by requiring the AI to perform the synthesis upfront, during the ingestion phase, creating a structured wiki that sits between the raw sources and the user. This allows the agent to recognize how new data enriches or challenges existing knowledge, moving the paradigm from stateless retrieval to a cumulative understanding maintained by machines but guided by humans.

The technical efficiency of this model is significant, with reports indicating it can be up to 70 times more efficient than traditional RAG for agent-accessible knowledge bases. This efficiency stems from the fact that the agent reads a compact, well-organized markdown document rather than hunting through millions of vector-indexed fragments. By raising the signal-to-noise ratio of what the agent reads, the LLM Wiki allows for deeper reasoning over a bounded, curated corpus, making it ideal for intensive research projects, complex project onboarding, and the management of internal team expertise. The use of Markdown as the primary storage format is strategic; it is human-readable, machine-parseable, and natively compatible with version control systems like Git, ensuring that the knowledge substrate remains portable and auditable over the long term.

| Feature | Retrieval-Augmented Generation (RAG) | Karpathy-Style LLM Wiki |
| --- | --- | --- |
| Primary Process | Indexing and semantic retrieval | Upfront compilation and synthesis |
| Knowledge State | Stateless (re-derived per query) | Stateful (compounds over time) |
| Storage Infrastructure | Vector databases and embedding pipelines | Plain text Markdown files and Git |
| Human Interface | Chat-based ephemeral interaction | Persistent, navigable wiki (e.g., Obsidian) |
| Synthesis Depth | Limited to retrieved chunks | Deep cross-references across the corpus |
| Auditability | Difficult to verify source retrieval | High through file diffs and Git history |

Professional Implementation of Agentic Systems into Service Portfolios

The shift toward agentic knowledge management is not merely a personal productivity trend but a strategic advancement for modern digital agencies. For a USA-headquartered organization like The Softix, which focuses on providing enterprise-grade IT solutions and custom software, the ability to manage complex project documentation through an LLM Wiki represents a methodological leap in client service. By integrating these systems, agencies providing custom WordPress development services can ensure that every technical decision, API integration, and architectural choice is captured in a self-healing knowledge substrate that remains aligned with the client’s strategic goals. This approach moves beyond traditional “one-size-fits-all” documentation, allowing for the creation of project-specific wikis that grow more sophisticated as the development lifecycle progresses, ultimately providing a higher level of precision and reliability for startups and growing enterprises alike.

Within a professional development environment, the LLM Wiki acts as the “operating manual” for the project, where the AI agent is tasked with the tedious bookkeeping that human developers often overlook. This includes maintaining a chronological log of changes, updating an index of all technical entities, and flagging contradictions between different versions of a product specification. When a team utilizes an agent like Claude Code or Cursor, the CLAUDE.md or AGENTS.md schema file acts as the contract between the developers and the AI, defining the strict conventions that must be followed during the ingestion of new sources. This discipline ensures that the knowledge base remains a high-fidelity representation of the current state of the software, facilitating faster onboarding for new team members and reducing the “Context Debt” associated with legacy codebases.

| Softix Service Category | Core Development Focus | Knowledge Substrate Utility |
| --- | --- | --- |
| Web App Development | Enterprise-grade customized solutions | Centralized documentation of business logic |
| Mobile App Development | Native and cross-platform solutions | Interlinked specs for iOS and Android parity |
| CRM Development | Custom business growth tools | Persistent memory of client relationship data |
| SaaS Development | Scalable Software as a Service | Versioned mapping of multi-tenant architectures |
| WordPress Customization | Premium tailored functionality | Structured record of plugin and theme logic |

Scaling Knowledge Substrates for Enterprise Efficiency

The evolution of digital infrastructure is increasingly favoring systems that are optimized for machine consumption as much as human browsing. The field of WordPress website development has reached a point where the content itself must be prepared for agentic browsing and generative search engines. By adopting a markdown-first, Git-backed architecture, enterprises can eliminate the “database bloat” inherent in traditional CMS platforms and maximize the extractability of their data for AI crawlers. This is particularly relevant for the service offerings of The Softix, which target a wide range of industries including fintech, healthcare, and law firms, where the accuracy and accessibility of technical and policy information are paramount. Storing content in a version-controlled markdown wiki allows these organizations to bridge the gap between their marketing content and engineering documentation, ensuring that every piece of information is cited, auditable, and ready for ingestion by both internal AI agents and external answer engines.

A critical component of this scalability is the use of automated workflows, such as GitHub Actions, to maintain the wiki. In an enterprise setting, an agentic workflow can fetch recent updates from a changelog, compare them against existing documentation, and autonomously open a Pull Request to resolve any discrepancies. This “Continuous Documentation” model ensures that the knowledge base remains current without requiring constant manual intervention from the development team. Furthermore, systems like Cloudflare’s “Markdown for Agents” enable the automatic conversion of HTML pages into markdown when requested by AI agents, which can reduce token usage by up to 80% and significantly improve the performance of LLMs in reasoning tasks. This technical alignment ensures that as a business grows, its knowledge substrate remains a high-performance asset that supports informed decision-making and efficient technical operations.

| Scalability Metric | Traditional Documentation | Agentic Wiki (Git-Based) |
| --- | --- | --- |
| Maintenance Cost | Increases exponentially with size | Stabilized through AI automation |
| Search Precision | Keyword/semantic search prone to noise | Intent-driven retrieval via structured index |
| Token Efficiency | High consumption (unstructured HTML) | Low consumption (curated Markdown) |
| Update Velocity | Manual and prone to lag | Real-time via Git hooks and CI/CD |
| Collaboration | Fragmented across tools | Unified via Pull Request (PR) workflow |

The Three-Layer Architecture: An Engineering Blueprint

The structural integrity of a Karpathy-style wiki depends on a rigid three-layer architecture that separates raw input from synthesized output and governing logic. The first layer, the raw/ directory, serves as the immutable source of truth. This folder contains the unedited source material (research papers, meeting transcripts, code repositories, and web clippings) that the AI agent uses as the verification baseline for all claims. It is a deliberate design choice that these files are never modified by the agent; if the synthesis logic evolves or a new model is introduced, the entire wiki can be re-compiled from these original sources without loss of fidelity. Professionals often use tools like the Obsidian Web Clipper to populate this layer, converting live web content into clean markdown and downloading associated images to local disk to ensure that the AI can reference visual context even if external URLs break.

The second layer is the wiki/ directory, which is the domain where the LLM performs its synthesis and maintenance work. This directory is typically organized by content type, including sub-folders for concepts/, entities/, sources/ (which house individual summaries), and comparisons/. Two core files govern this layer: index.md, a content-oriented catalog that the agent reads first to navigate the wiki, and log.md, an append-only operation log that tracks every ingestion and update with high-fidelity timestamps. This layer is designed to be human-readable but AI-maintained, providing a persistent knowledge substrate that is significantly easier for an LLM to reason over than a collection of raw, disconnected documents.

The third layer is the CLAUDE.md (or AGENTS.md) file, which serves as the “brain” or the instruction set that turns a generic AI into a disciplined wiki curator. This configuration file defines the naming conventions, page templates, and strict operational workflows that the agent must follow. It mandates that every factual claim in the wiki must link back to a specific source in the raw/ directory using standard [[wikilink]] syntax, ensuring that the entire knowledge graph remains grounded in evidence. This schema is often co-evolved by the user and the agent over time, allowing the system to adapt to the specific requirements of the domain, whether it be technical engineering, legal research, or product management.

| Layer Type | Directory/File | Primary Responsibility | Ownership |
| --- | --- | --- | --- |
| Raw Source | raw/ | Immutable ground truth (PDFs, notes, data) | Human Curator |
| Knowledge Base | wiki/ | Synthesized concept and entity pages | AI Agent |
| Navigation | wiki/index.md | Master catalog and routing guide | AI Agent |
| Audit Log | wiki/log.md | Append-only history of operations | AI Agent |
| Schema/Config | CLAUDE.md | Rules, workflows, and page templates | Co-evolved |
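
The layout described above can be sketched as a small scaffolding script. This is a minimal illustration rather than a prescribed setup: the function name `scaffold_wiki` and the starter contents of each file are assumptions, while the directory and file names come from the description above.

```python
from pathlib import Path

# Sub-folder names follow the layout described in the text.
WIKI_SUBDIRS = ("concepts", "entities", "sources", "comparisons")


def scaffold_wiki(root: Path) -> None:
    """Create the three-layer layout under `root` without overwriting files."""
    (root / "raw").mkdir(parents=True, exist_ok=True)
    for name in WIKI_SUBDIRS:
        (root / "wiki" / name).mkdir(parents=True, exist_ok=True)

    # Starter contents are placeholders (assumptions), not a required schema.
    starters = {
        root / "wiki" / "index.md": "# Index\n",
        root / "wiki" / "log.md": "# Log\n",
        root / "CLAUDE.md": "# Wiki schema\n\n- Never modify files under raw/.\n",
    }
    for path, text in starters.items():
        if not path.exists():  # keep a schema the user has already evolved
            path.write_text(text, encoding="utf-8")


if __name__ == "__main__":
    scaffold_wiki(Path("my-wiki"))
```

Because existing files are left untouched, the script can be re-run safely after the schema has been customized.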

The Technical Execution of Knowledge Ingestion and Synthesis

The process of populating an LLM Wiki is defined by a 9-step ingestion workflow that prioritizes discussion and synthesis over simple data transfer. When a human curator drops a new document into the raw/ folder, the AI agent does not just index it for later retrieval; instead, it reads the entire source and engages in a brief discussion with the user about the key takeaways. Once the primary insights are agreed upon, the agent creates a source summary page and proceeds to “cascade” updates across the wiki. A single source might impact 10 to 15 different pages, as the agent identifies new concepts, enriches existing entity profiles, and updates comparison tables. This compounding property ensures that with every source added, the knowledge graph becomes denser and more interlinked, rather than simply larger.

This stateful nature of the LLM Wiki allows for complex, multi-step queries that traditional RAG systems struggle to handle. Because the synthesis has already been performed during the ingestion phase, the agent can answer high-level questions by reading the relevant concept pages and the master index. A significant innovation of this pattern is the ability to “file back” valuable answers into the wiki as permanent analysis pages. If an agent performs a deep comparison of three different research papers at the user’s request, that analysis does not disappear into a chat history; it becomes a new node in the knowledge graph, ensuring that the results of human-AI exploration are preserved for future use.

| Workflow Stage | Step Number | Agent Operation | Outcome |
| --- | --- | --- | --- |
| Discovery | 1 | Read full source from raw/ | Initial comprehension |
| Discussion | 2 | Outline key takeaways to human | User alignment |
| Summarization | 3 | Create source page in wiki/sources/ | Immutable reference |
| Extraction | 4 | Identify new concepts/entities | Content expansion |
| Update | 5 | Enrich existing pages with new data | Knowledge compounding |
| Interlinking | 6 | Add [[wikilinks]] between pages | Graph connectivity |
| Indexing | 7 | Update wiki/index.md catalog | Navigation health |
| Auditing | 8 | Log session details in wiki/log.md | Traceability |
| Visualization | 9 | Update Obsidian graph view | Visual context |
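
The purely mechanical steps of this workflow (3, 7, and 8) can be sketched in a few lines. The synthesis itself is the agent's job and is represented here only by a `summary` string passed in. The function name `record_ingestion` and the exact line formats for the index and log are hypothetical choices for illustration, not part of the pattern as described.

```python
from datetime import datetime, timezone
from pathlib import Path


def record_ingestion(wiki: Path, raw_name: str, title: str, summary: str) -> Path:
    """Write a source page, then update index.md and log.md (steps 3, 7, 8)."""
    slug = Path(raw_name).stem
    page = wiki / "sources" / f"{slug}.md"
    page.parent.mkdir(parents=True, exist_ok=True)
    # The source page cites its origin in raw/ with a wikilink.
    page.write_text(
        f"# {title}\n\nSource: [[raw/{raw_name}]]\n\n{summary}\n", encoding="utf-8"
    )

    # Add the page to the catalog once, even if the source is re-ingested.
    index = wiki / "index.md"
    entry = f"- [[sources/{slug}]]: {title}"
    lines = index.read_text(encoding="utf-8").splitlines() if index.exists() else []
    if entry not in lines:
        lines.append(entry)
        index.write_text("\n".join(lines) + "\n", encoding="utf-8")

    # log.md is append-only: open in "a" mode and never rewrite earlier lines.
    stamp = datetime.now(timezone.utc).isoformat(timespec="seconds")
    with (wiki / "log.md").open("a", encoding="utf-8") as fh:
        fh.write(f"- {stamp} ingest raw/{raw_name} -> sources/{slug}.md\n")
    return page
```

Re-ingesting the same source overwrites its summary page and adds a new log line, but leaves a single index entry, which keeps the catalog clean while preserving the audit trail.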

The Self-Healing Mechanism: Maintenance and Linting

A human-maintained wiki inevitably fails because the burden of “bookkeeping” (updating cross-references, managing tags, and resolving contradictions) grows faster than the value of the knowledge contained. The Karpathy-style wiki solves this by assigning these tedious tasks to the AI agent through periodic “health checks” or linting workflows. The agent scans the entire wiki/ directory to identify structural and logical issues that a human would likely miss. This includes finding “orphan pages” (files with no incoming links), identifying broken wikilinks, and flagging claims that have been superseded or contradicted by newer source material. This self-healing process ensures that the knowledge substrate remains coherent and reliable, even as it grows to hundreds of pages.

The technical parameters for these checks are defined in the CLAUDE.md schema, ensuring that the linting is systematic rather than ad-hoc. The agent can even suggest new questions to investigate or identify missing concepts that are frequently mentioned but lack their own dedicated page. In some advanced configurations, these health checks are automated via cron-driven GitHub Actions, providing a “CI/CD for Knowledge” where the agent acts as a diligent archivist that works overnight to tidy the facts and surface inconsistencies for human review. This level of maintenance transforms the knowledge base from a static archive into a living, evolving organism that actively supports the researcher’s intellectual growth.

| Linting Target | Technical Check | Proposed Fix |
| --- | --- | --- |
| Contradictions | Factual discrepancies between pages | Flag with source quotes for human review |
| Orphan Pages | Pages with no inbound links | Suggest integration into index or existing pages |
| Missing Concepts | Referenced terms without a page | Create new draft page based on existing context |
| Stale Claims | Data superseded by newer sources | Flag for update or mark as deprecated |
| Broken Links | Internal [[links]] pointing to non-existent files | Remove link or rename target for consistency |
| Format Integrity | Deviation from mandatory templates | Re-format page to adhere to CLAUDE.md schema |
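
Two of these checks, orphan pages and broken links, are purely structural and need no model at all. The sketch below assumes that wikilinks use paths relative to wiki/ (for example `[[concepts/rag]]`); Obsidian can also resolve bare file names, which this version does not handle. The function name `lint_wiki` and the report format are illustrative assumptions.

```python
import re
from pathlib import Path

# Matches [[target]], [[target|alias]] and [[target#heading]]; captures the target.
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def lint_wiki(wiki: Path) -> dict[str, list[str]]:
    """Report broken links and orphan pages for review; nothing is auto-fixed."""
    pages = {
        p.relative_to(wiki).with_suffix("").as_posix(): p for p in wiki.rglob("*.md")
    }
    inbound = {name: 0 for name in pages}
    broken = []
    for name, path in pages.items():
        for target in WIKILINK.findall(path.read_text(encoding="utf-8")):
            target = target.strip()
            if target.startswith("raw/"):
                continue  # citations into raw/ live outside wiki/; skipped here
            if target in pages:
                if target != name:  # a self-link does not rescue an orphan
                    inbound[target] += 1
            else:
                broken.append(f"{name} -> {target}")
    # index.md and log.md are entry points, so they are never reported as orphans.
    orphans = [
        n for n, count in inbound.items() if count == 0 and n not in ("index", "log")
    ]
    return {"broken_links": sorted(broken), "orphans": sorted(orphans)}
```

Checks such as contradictions and stale claims require reading comprehension and remain the agent's responsibility; a script like this only narrows down where the agent needs to look.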

Visual Intelligence: Diagrams-as-Code with Mermaid.js

One of the most innovative extensions of the Karpathy pattern is the integration of visual modeling through “Diagrams-as-Code”. Mermaid.js is a text-based diagramming tool that allows users to generate flowcharts, sequence diagrams, and system architectures using markdown-inspired syntax. Because the diagram structure is stored as plain text within markdown files, it is perfectly suited for AI agent manipulation. An agent can be tasked with “diagramming the end-to-end flow of our payment process” or “creating a sequence diagram of the user login sequence,” and it will produce the Mermaid markup that renders instantly in the Obsidian front-end. This eliminates the need for cumbersome drag-and-drop tools and ensures that visual documentation remains as current as the text itself.

This capability significantly speeds up the design phase of technical projects, as developers can rapidly iterate on architecture ideas by simply prompting the AI to “add a database fallback to this flow” or “include an error path for invalid credentials”. For agents operating inside a wiki, Mermaid provides a way to visualize the decision-making loops and tool orchestrations they perform, making their autonomous actions transparent to the human curator. Advanced agents can even perform “Image-to-Graph” operations, where they analyze a photo of a whiteboard drawing and reverse-engineer it into Mermaid code, effectively digitizing analog brainstorming sessions into the version-controlled knowledge substrate.

| Diagram Category | Use Case in LLM Wiki | Visualization Mechanism |
| --- | --- | --- |
| Flowchart | Mapping decision trees and agentic logic | Nodes, diamonds (decisions), and arrows |
| Sequence Diagram | Illustrating multi-component interactions | Vertical lifelines and horizontal message arrows |
| Gantt Chart | Tracking project milestones and task duration | Time-scaled bars with dependency markers |
| Entity-Relationship | Modeling database schemas and concept ties | Boxes with primary/foreign key attributes |
| State Diagram | Visualizing complex system states/transitions | Circles (states) and transition arrows |
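
To make the “diagram as plain text” idea concrete, the following sketch generates Mermaid flowchart markup from a list of edges. The helper name `mermaid_flowchart` and the login example are hypothetical; the emitted syntax (`flowchart TD`, bracketed rectangles, braced diamonds, and `-->|label|` arrows) is standard Mermaid. Labels containing double quotes are not escaped in this minimal version.

```python
def mermaid_flowchart(edges, decisions=frozenset(), direction="TD"):
    """Render (source, target, label) triples as Mermaid flowchart text."""
    # Collect node labels in first-seen order and give each a short ID.
    labels = []
    for src, dst, _ in edges:
        for text in (src, dst):
            if text not in labels:
                labels.append(text)
    ids = {text: f"n{i}" for i, text in enumerate(labels)}

    lines = [f"flowchart {direction}"]
    for text in labels:
        # Decision nodes use braces (diamond); other nodes use brackets (rectangle).
        shape = f'{{"{text}"}}' if text in decisions else f'["{text}"]'
        lines.append(f"    {ids[text]}{shape}")
    for src, dst, label in edges:
        arrow = f"-->|{label}|" if label else "-->"
        lines.append(f"    {ids[src]} {arrow} {ids[dst]}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(
        mermaid_flowchart(
            [
                ("Submit login", "Valid credentials?", ""),
                ("Valid credentials?", "Show dashboard", "yes"),
                ("Valid credentials?", "Show error", "no"),
            ],
            decisions={"Valid credentials?"},
        )
    )
```

Pasting the printed text into a fenced block tagged `mermaid` inside a wiki page lets Obsidian render it, and a later request such as “add an error path” becomes a one-line diff in Git.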

The Human-in-the-Loop: Curating the Agentic Workforce

While the AI agent handles the heavy lifting of summarization and interlinking, the human curator remains the final authority on the “ground truth” and the strategic direction of the wiki. Karpathy’s methodology emphasizes a specific division of labor: the human curates high-signal sources and asks deep questions, while the LLM manages the bookkeeping and maintains the knowledge graph. This collaborative model is particularly evident during the ingestion phase, where the agent discusses its findings with the user before committing them to the permanent wiki; this ensures that the synthesis aligns with the human’s judgment and expertise. The human is also responsible for co-evolving the CLAUDE.md schema, adjusting the rules and templates as the project’s needs become more refined.

This partnership is facilitated by the “IDE” of the system, typically Obsidian, which provides the visual feedback needed for the human to monitor the agent’s work. Through the Graph View, a researcher can see new connections emerging in real-time, identifying “hubs” of high-density knowledge or “orphaned” areas that require more research. If the agent proposes an analysis that is particularly insightful, the human can choose to “promote” it from a temporary chat response to a permanent wiki page, ensuring that the knowledge compounds across sessions. This synergy between human taste and machine-scale memory allows for a form of “slow, careful, cumulative understanding” that is often lost in the fast-paced, ephemeral nature of standard AI interactions.

| Component | Responsibility | Primary Value Provided |
| --- | --- | --- |
| Human Curator | Source selection and strategic questioning | Judgment, taste, and domain expertise |
| AI Agent | Summarization, interlinking, and bookkeeping | Scalable memory and tireless maintenance |
| Obsidian | Visual interface and graph rendering | Cognitive offloading and visual feedback |
| Git/GitHub | Versioning and collaborative review | Trust, auditability, and safety |
| Markdown | Universal data representation | Portability and LLM native extractability |

Infrastructure and Tooling: Local First vs. Enterprise Cloud

The Karpathy pattern is inherently flexible, allowing it to be deployed as either a “personal productivity hack” or a “viable enterprise architecture”. For personal use, the stack is typically local-first, utilizing tools like Obsidian and Ollama to run models on private hardware. This setup prioritizes privacy and security, as no data ever leaves the user’s network. Power users may choose different hardware tiers depending on their needs, ranging from 16GB RAM for entry-tier models (like Llama 3.3:8b) to high-performance GPUs for “power tier” reasoning models. This local setup avoids network round-trips and gives absolute control over the knowledge substrate, which remains “cat-able” and “git clone-able” at all times.

At the enterprise level, the pattern scales into a “team knowledge substrate” hosted in Git repositories and managed via shared AI agents like Claude Code or team-wide Cursor configurations. In this environment, the “Docs-as-Code” methodology allows documentation to live alongside the application code, ensuring that developers and technical writers can collaborate using the same Pull Request (PR) and code review processes. Organizations can implement a multi-agent orchestration layer where specialized agents handle different parts of the wiki lifecycle, from “Pam the Archivist”, who tidies the facts, to a senior “Reviewer Agent” that approves drafts before they are promoted to the canonical team wiki. This shift toward persistent, shared memory systems transforms a company’s fragmented documentation into a “digital assembly line” that accelerates delivery and reduces technical debt.

| Tier | Infrastructure | Typical LLM | Use Case |
| --- | --- | --- | --- |
| Entry Tier | 16 GB RAM (No GPU) | Llama 3.3 (8b) | Personal notes and simple wikis |
| Mid Tier | 32 GB RAM / 12 GB VRAM | Qwen 2.5 (14b) | Research projects and technical docs |
| Power Tier | 24+ GB VRAM | Llama 4 Scout / GPT-4o | Enterprise scale and deep synthesis |
| Team/Cloud | GitHub / Cloud Runners | Claude 3.5 Sonnet | Collaborative codebases and wikis |

The Agentic Web: Markdown as the Lingua Franca

The transition toward an “agentic web” is being driven by the need for structured, machine-friendly representations of data that reduce the computational overhead of processing traditional HTML. Markdown has emerged as the “lingua franca” for this new era, as its explicit structure results in better AI reasoning and minimal token waste. Cloudflare’s “Markdown for Agents” feature is a pioneering implementation of this trend, allowing websites to serve a streamlined markdown variant of their pages to AI crawlers through standard HTTP content negotiation. By sending an Accept: text/markdown header, an AI agent can bypass the “noise” of div wrappers, scripts, and styling, receiving instead a pure semantic representation of the content.
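
From the agent's side, this negotiation amounts to setting one request header. The sketch below uses only the Python standard library. The helper names and the HTML fallback with a lower `q` weight are assumptions added for illustration, and whether a given site actually returns Markdown depends on its configuration.

```python
from urllib.request import Request, urlopen


def markdown_request(url: str) -> Request:
    """Build a GET request that prefers Markdown and accepts HTML as a fallback."""
    return Request(url, headers={"Accept": "text/markdown, text/html;q=0.8"})


def fetch(url: str, timeout: float = 10.0) -> tuple[str, bool]:
    """Return (body, is_markdown) so the caller knows which variant was served."""
    with urlopen(markdown_request(url), timeout=timeout) as resp:
        content_type = resp.headers.get_content_type()
        charset = resp.headers.get_content_charset() or "utf-8"
        body = resp.read().decode(charset, errors="replace")
        return body, content_type == "text/markdown"
```

Checking the response `Content-Type` matters because a server that ignores the header will simply return HTML, in which case the agent would need its own HTML-to-Markdown conversion step.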

This move toward machine-readable variants is not just about efficiency but about visibility. In a future where users interact with “answer engines” like ChatGPT Search or Perplexity, the goal for any organization, including digital agencies like The Softix, is to ensure their content is correctly understood and cited. Generative Engine Optimization (GEO) involves preparing content in high-signal formats like markdown, ensuring that heading hierarchies, ordered lists, and blockquotes are clearly defined. The LLM Wiki pattern is the natural internal extension of this external trend; by maintaining an internal markdown-based knowledge graph, a company ensures that its most valuable intellectual property is natively optimized for the age of AI.

| GEO Strategy | Technical Implementation | AI Reasoning Benefit |
| --- | --- | --- |
| Semantic Hierarchy | Strict use of Markdown headers (H1-H6) | Identifies core arguments/entities |
| Token Shrinkage | HTML-to-Markdown conversion | 80% reduction in processing cost |
| Structured Metadata | YAML Frontmatter (tags, JSON-LD) | Maps content to brand knowledge graph |
| Content Signals | Content-Signal HTTP header | Expresses preferences for AI training |
| In-IDE Preview | Visual Studio 2026/Obsidian | Ensures human-AI parity in data view |

Strategic Implications and Final Conclusions

The shift from Retrieval-Augmented Generation to the LLM Wiki pattern marks a fundamental change in the relationship between humans and their information systems. By delegating the “bookkeeping” of knowledge synthesis, interlinking, and maintenance to AI agents while retaining human-readable markdown files as the source of truth, researchers and enterprises can overcome the limitations of stateless AI interactions. This “compilation” model of knowledge management creates a compounding asset where insights are preserved, contradictions are surfaced, and cross-references are automatically drawn, leading to a deeper and more auditable understanding of complex domains.

For professionals and organizations aiming to implement this paradigm, several critical success factors have been identified. First, the adoption of a “Docs-as-Code” approach ensures that documentation benefits from the rigor of version control and collaborative review. Second, the rigorous definition of a schema through files like CLAUDE.md is essential to maintaining agentic discipline and structural consistency. Third, the integration of visual modeling via Mermaid.js provides a powerful layer of cognitive offloading, ensuring that complex system flows are captured in a version-controlled, text-based format. Finally, the move toward markdown-first architectures aligns internal knowledge management with the broader trends of the agentic web and Generative Engine Optimization, ensuring that organizational expertise remains visible and accurate in an AI-dominated information ecosystem.

Ultimately, the LLM Wiki is more than just a note-taking strategy; it is a blueprint for a stateful, compounding intelligence workforce. As large language models continue to evolve from simple chatbots into autonomous agents capable of multi-step planning and execution, the ability to provide them with a structured, versioned, and persistent “second brain” will be the defining characteristic of high-performance knowledge work. By reimagining the wiki as a compiled codebase of knowledge, we transition from the ephemeral noise of one-off chats to a sustainable, growing architecture of understanding.